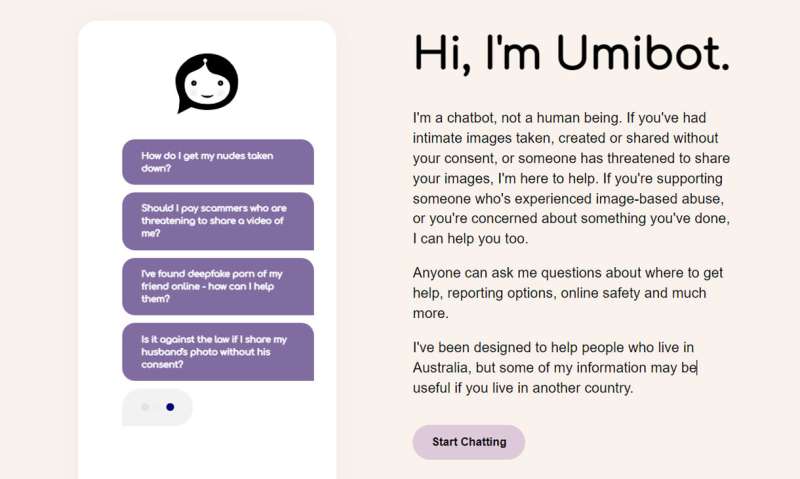

A chatbot takes on image-based abuse

- December 1, 2022


Image-based abuse (when someone takes, posts or threatens to share nude, semi-nude or sexually explicit images or videos without consent) is a growing problem, experienced by 1 in 3 Australians surveyed in 2019.

Professor Nicola Henry of RMIT’s Center for Social and Global Studies, the lead researcher behind the creation of “Umibot,” said that “deepfake” content (fake videos or AI-generated images), situations where people are pressured into creating sexual content, and the sending of unsolicited sexual images or videos are also considered image-based abuse.

“This is a serious breach of trust designed to shame, punish or humiliate. It is often a way for abusers to exert power and control over others,” said Henry, an Australian Research Council Future Fellow. “Many of the victims we spoke with want the problem to go away and the content taken down or deleted, but often they don’t know where to turn for help.”

That’s what this pilot chatbot is for.

The idea came to Henry after interviewing survivors about their experiences of image-based abuse. Although the people she spoke to had different experiences, Henry said they often didn’t know where to turn for help, and some didn’t know that what had happened to them was a crime.

“Victims we interviewed said they were often blamed and shamed by their friends, family members and others, which made them even more reluctant to seek help,” Henry said.

Dr Alice Witt, an RMIT researcher who worked with Henry on the project, said Umibot cannot replace human support, but is designed to help people navigate complex pathways and give them advice on reporting options, evidence-gathering and staying safe online.

“It’s not just for survivors,” Witt said. “Umibot was also designed to assist bystanders and even perpetrators, as a potential tool to prevent this abuse.”

How does Umibot work?

Users can type questions to Umibot or select answers from a range of options. Umibot also asks users whether they are under or over 18, and whether they need help for themselves, need help for someone else, or are concerned about something they have done. Their answers determine the support and information they receive, tailored to their experience.

Henry says Umibot is the first chatbot of its kind dedicated to victims of image-based abuse.

“There are other chatbots online that more broadly help people who have experienced various harms, but they do not focus on image-based abuse and do not have the same hybrid functionality that lets users type their own questions into the chatbot as well as choose from options,” Henry said.

A new approach to chatbot design

Henry and Witt teamed up with Melbourne digital agency Tundra to create Umibot using Amazon Lex, an artificial intelligence service for building natural language chatbots.

“We know that victims of image-based abuse have a wide variety of experiences beyond image-based abuse itself, so we designed Umibot as a fully inclusive, trauma-informed empowerment tool to support people of diverse experiences and backgrounds,” said Henry.

The team also worked with several consultants and conducted an independent accessibility audit to ensure Umibot meets global accessibility standards as closely as possible.

“Our main ethical challenge was to ensure that Umibot did not cause harm or distress, or make users feel like a burden,” Witt said. “Most survivors aren’t ready to talk to a human about their experiences, so designing Umibot to be responsive and helpful gives them a way to seek support without any pressure.”

Next steps for Umibot

Umibot is now available, and the researchers hope to develop Umibot version 2 for victims of image-based abuse, bystanders and perpetrators over the next few years.

“We hope that Umibot will not only empower victims to find support, but will also help us create ‘best practice’ guidelines for the design, development and implementation of digital tools and interventions to address online harm more broadly,” Witt said.

Source: Port Altele

John Wilkes is a seasoned journalist and author at Div Bracket. He specializes in covering trending news across a wide range of topics, from politics to entertainment and everything in between.