The year we left behind was perhaps one of the most eventful in the history of artificial intelligence. The reason is that artificial intelligence, which had already entered our lives, now appeared before us through more ‘experiential’ developments.

We typed a few words into DALL-E and Midjourney and designed our own graphics; with ChatGPT we saw that everything from writing code to writing a movie script is possible; and with apps like Lensa we turned our photos into stylized, concept-based designs…

Although all these developments say a great deal about how far artificial intelligence has come, there is also a dark side:

Technical details aside, artificial intelligence develops according to a very basic logic: it applies what it learns from humans and becomes a reflection of it. Over the years this has surfaced in many scandals, and judging by the latest examples, it will keep surfacing.

If you are wondering what these scandals are, let’s get right to it:

Artificial intelligence and algorithms have repeatedly produced racist rhetoric and ‘decisions’. Programs and algorithms powered by artificial intelligence have also humiliated women or treated them as mere sex objects, and have displayed a great deal of violent ‘behavior’.

AI-powered chatbots have produced homophobic rhetoric and answers such as “kill yourself” that can damage people’s mental health…

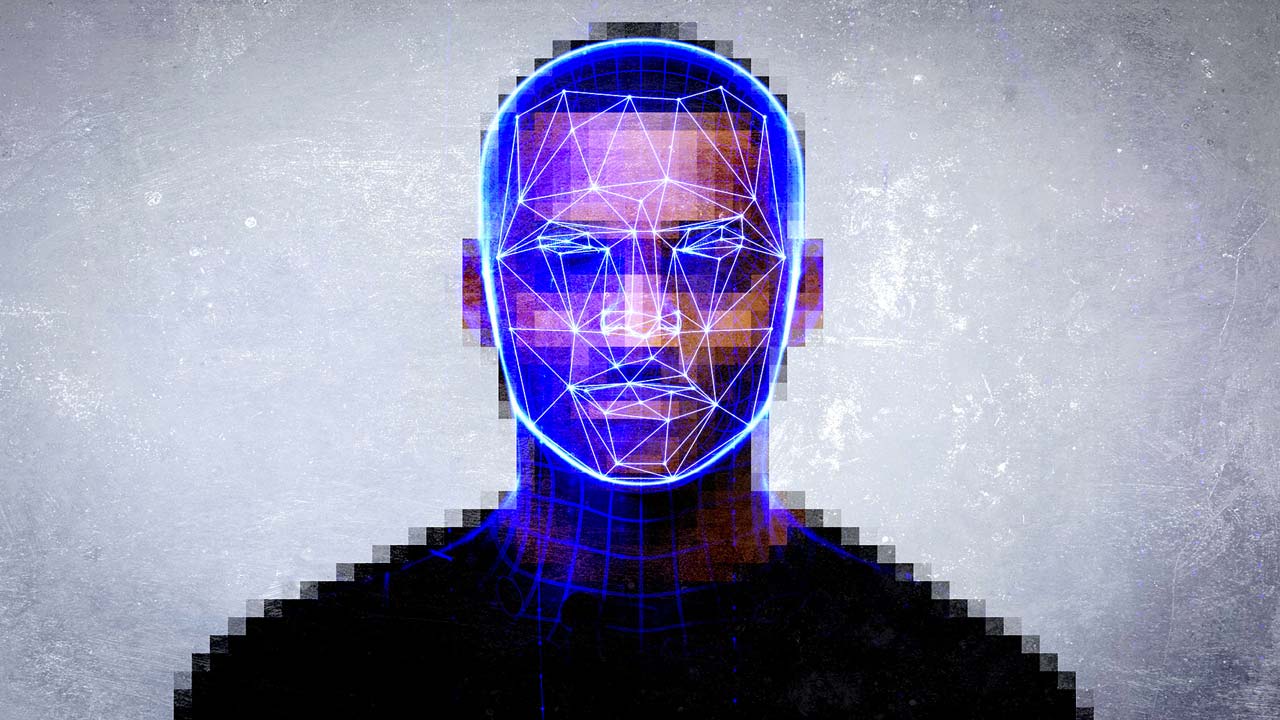

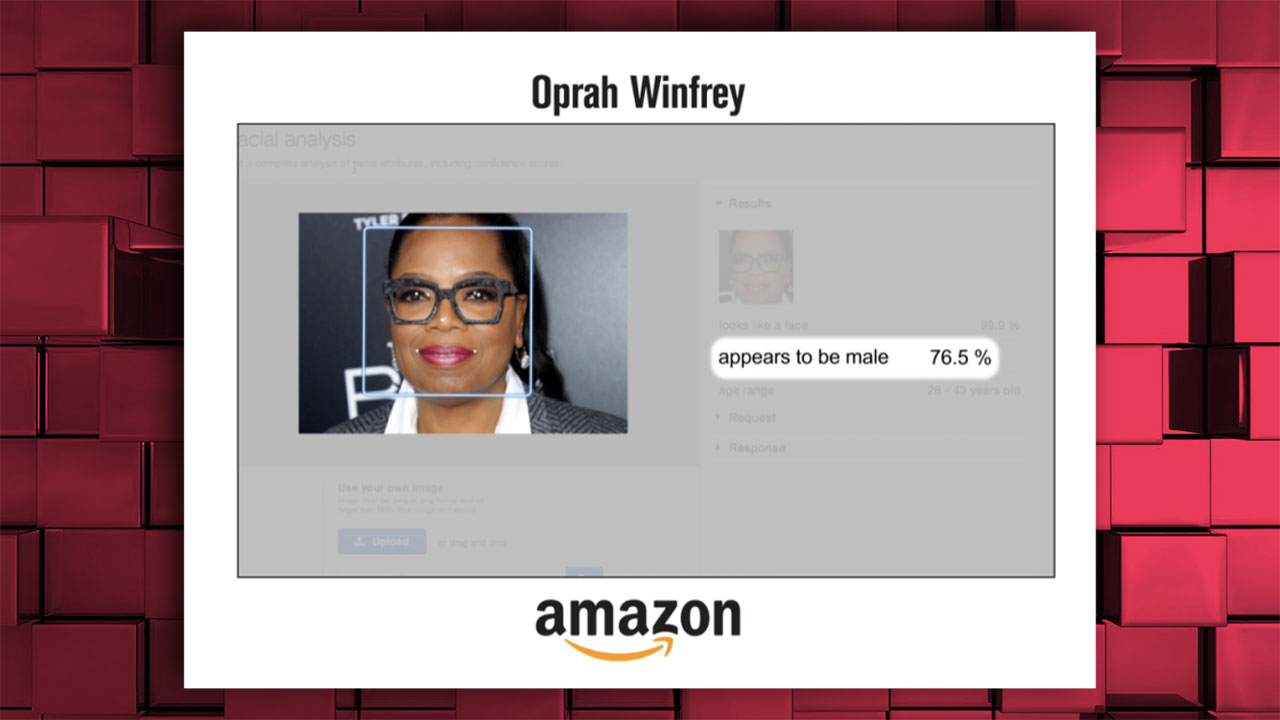

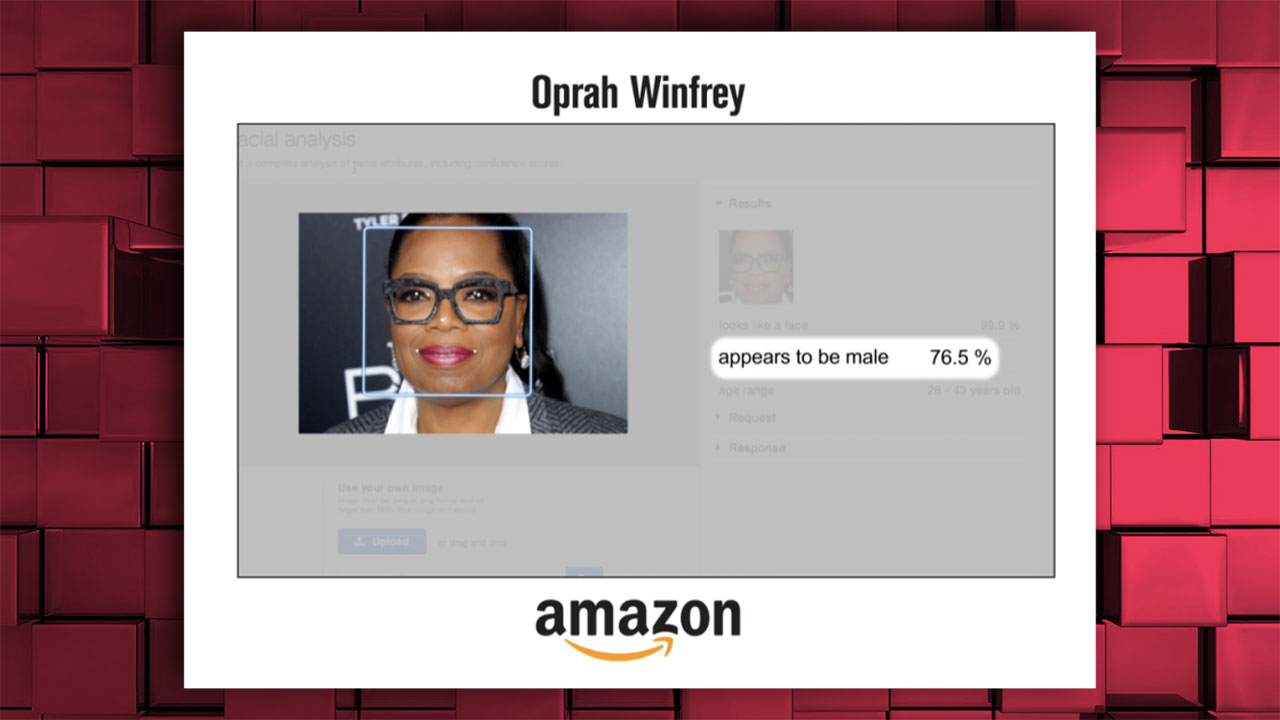

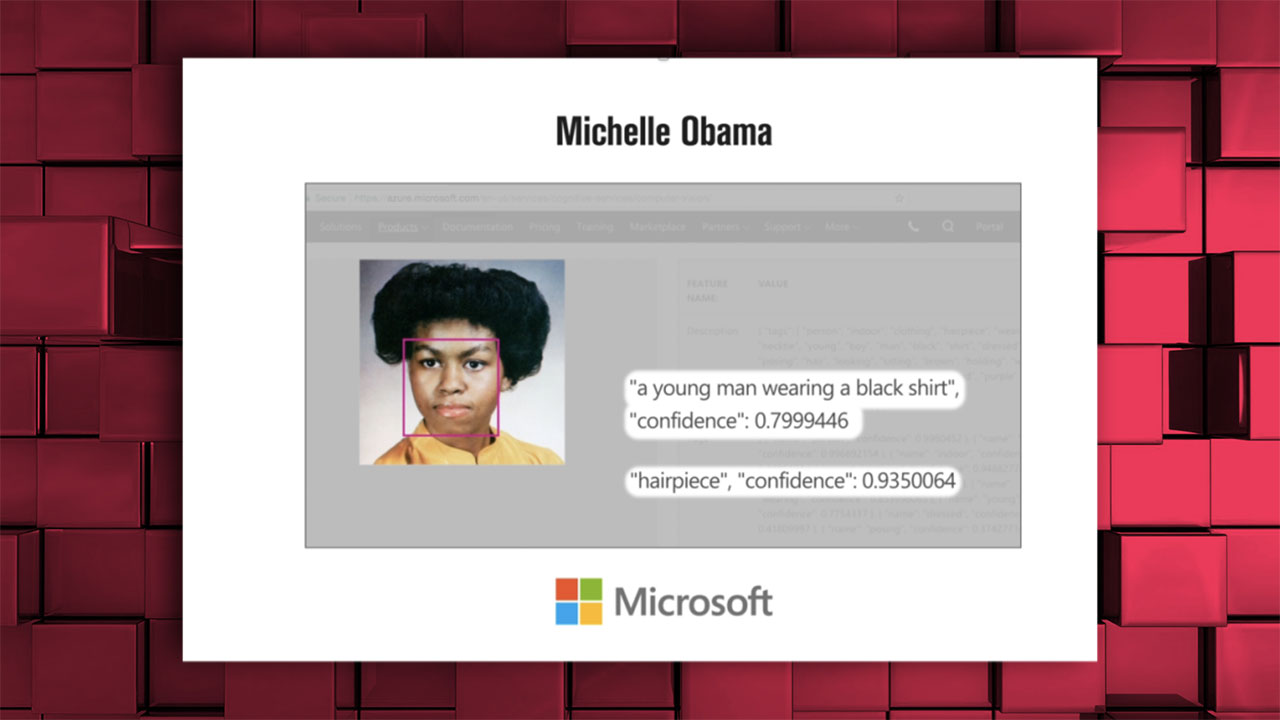

Artificial intelligence from giants like Amazon and Microsoft labeled the gender of black women as ‘masculine’:

Oprah Winfrey: ‘76.5% chance it was a man’

Michelle Obama was similarly described as a “young man”.

Serena Williams’ photo was also tagged as “masculine”.

Many studies of ‘word embeddings’, a method widely used in language modeling, have found words paired along lines such as woman-home, man-career and black-crime. In other words, artificial intelligence picks up these sexist and racist associations from the data it receives from us.

The same models associated ‘white’ names of European origin with more positive words and African American names with negative ones. Pairings such as white-rich and black-poor were also among the problems found in these models.
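To make the idea of words ‘matching’ a little more concrete: embedding studies typically measure an association with the cosine similarity between word vectors. The following is a minimal, hypothetical sketch in the style of the Word Embedding Association Test (WEAT), computing how much closer a word sits to one attribute set than to another. The four 3-dimensional vectors and the `toy_embeddings` name are invented by hand for illustration and are not taken from any real model; real studies use vectors learned from large text corpora, with hundreds of dimensions and many words per set.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def association(word, attr_a, attr_b, emb):
    """WEAT-style s(w, A, B): mean similarity to set A minus mean similarity to set B.

    A positive result means `word` is closer to attribute set A than to set B.
    """
    mean_a = sum(cosine(emb[word], emb[a]) for a in attr_a) / len(attr_a)
    mean_b = sum(cosine(emb[word], emb[b]) for b in attr_b) / len(attr_b)
    return mean_a - mean_b


# Hypothetical hand-made toy vectors, NOT from any real embedding model.
toy_embeddings = {
    "woman": [0.9, 0.1, 0.2],
    "man": [0.1, 0.9, 0.2],
    "home": [0.8, 0.2, 0.1],
    "career": [0.2, 0.8, 0.1],
}

score_woman = association("woman", ["home"], ["career"], toy_embeddings)
score_man = association("man", ["home"], ["career"], toy_embeddings)
print(f"woman: {score_woman:+.3f}  man: {score_man:+.3f}")
```

With these hand-made vectors, `score_woman` comes out positive (closer to ‘home’) and `score_man` negative (closer to ‘career’). A consistent sign pattern like this across many word pairs in a trained model is the kind of learned association such studies flag.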

Another study, focused on occupational groups, examined how ethnic origins were matched with occupations. Hispanic origin was linked to occupations such as doorman, mechanic and cashier; professions like professor, physicist and scientist were associated with Asian origin; and professions like expert, statistician and manager with ‘whites’.

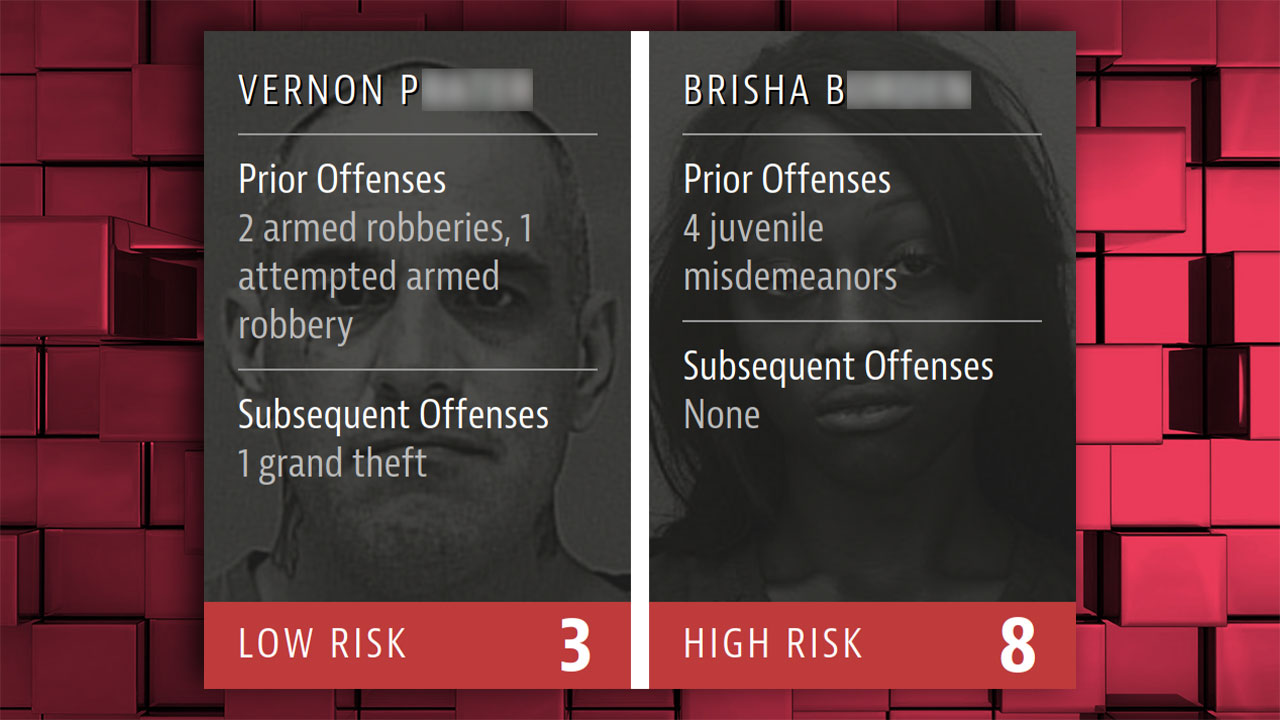

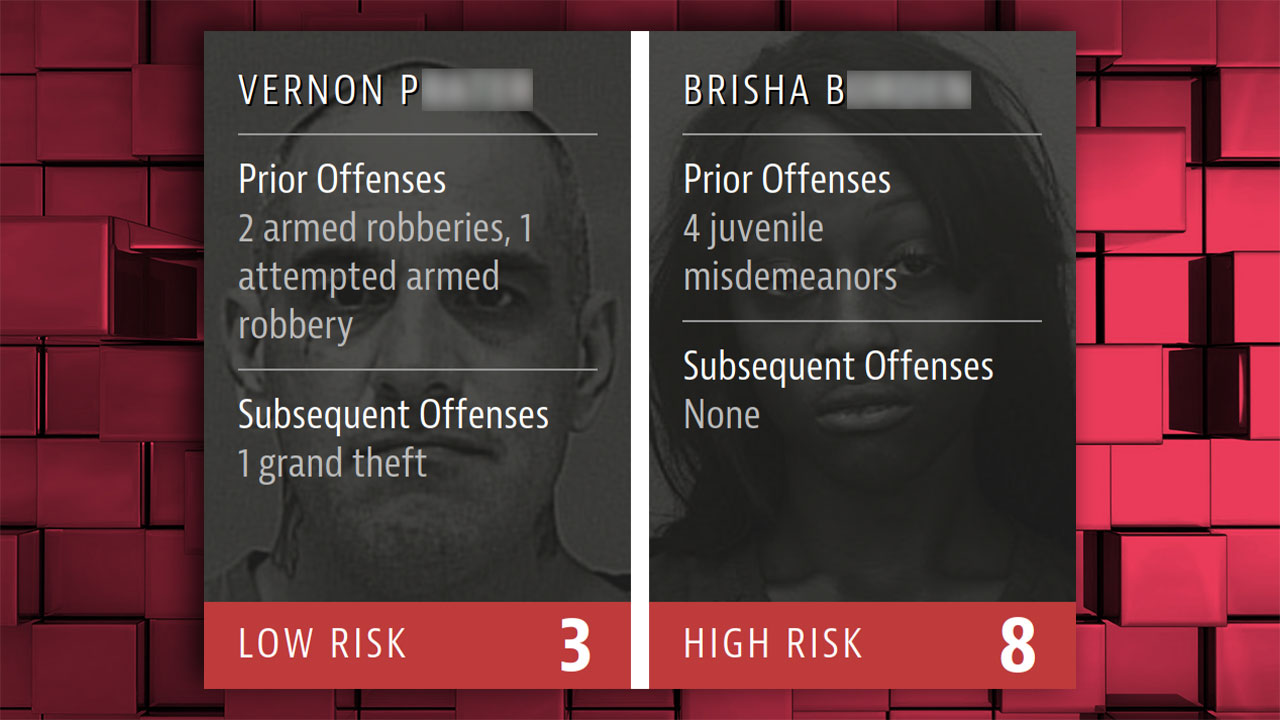

COMPAS, software that calculates the “risk of committing a crime”, caused controversy because it labeled black people as riskier. COMPAS has been used as an official tool by courts in many US states…

A striking example of how COMPAS works is its risk assessment of two people: an adult white man who had been involved in armed robbery and had repeated the crime, and a black woman whose record consisted of ‘juvenile offenses’, crimes usually committed as a minor, such as petty theft, graffiti, vandalism and simple assault.

Amazon’s artificial intelligence, developed for use in recruitment, picked most of its candidates from among men, lowered women’s scores and filtered out applicants from women’s schools. Amazon has this artificial intelligence…

It is alleged that YouTube’s algorithm “tags” people based on their race and content and restricts posts on topics related to racism; a major lawsuit has been filed against the company.

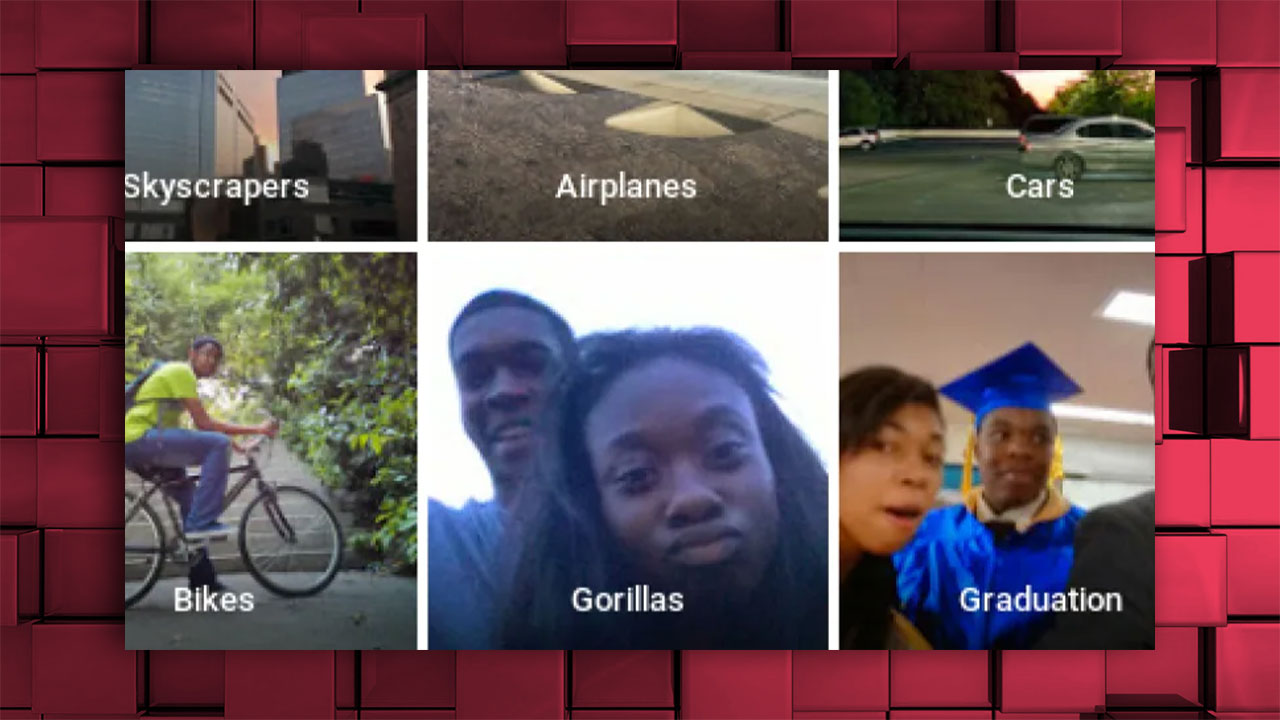

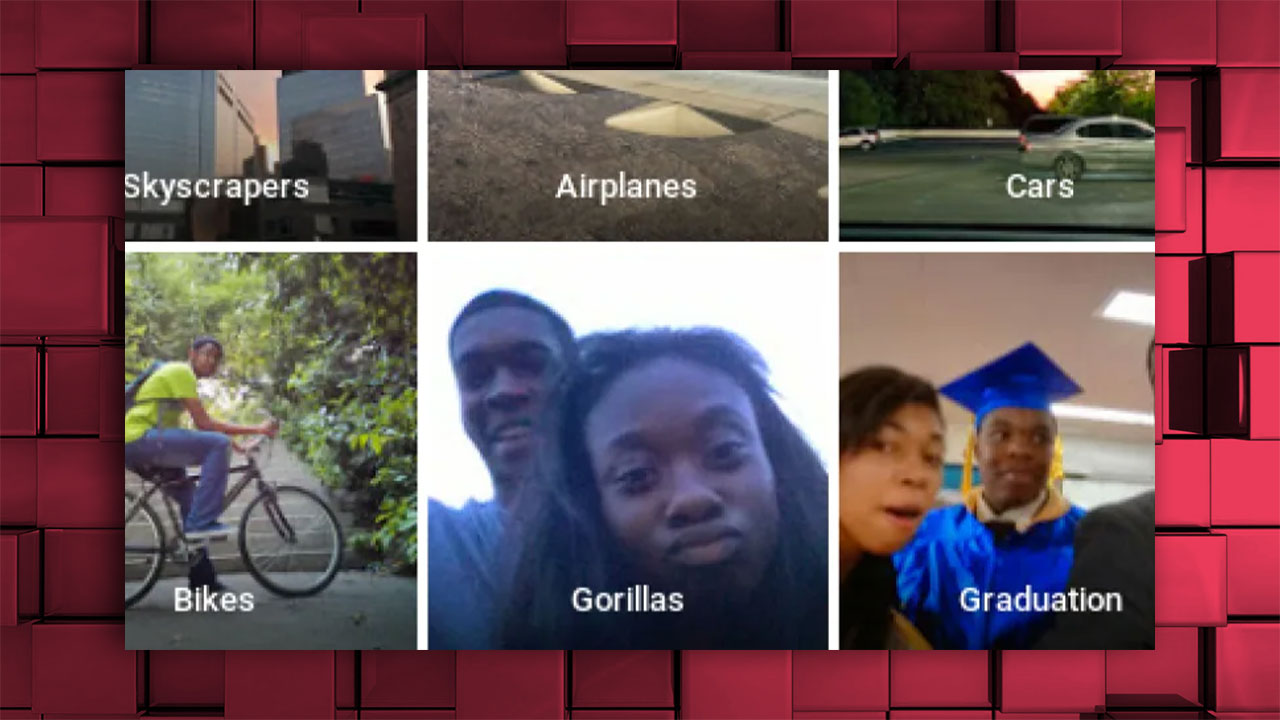

The Google Photos app tagged two black people as “Gorillas”…

A similar situation occurred on Facebook: a video featuring black people was tagged with the question “Do you want to keep seeing videos about primates?”

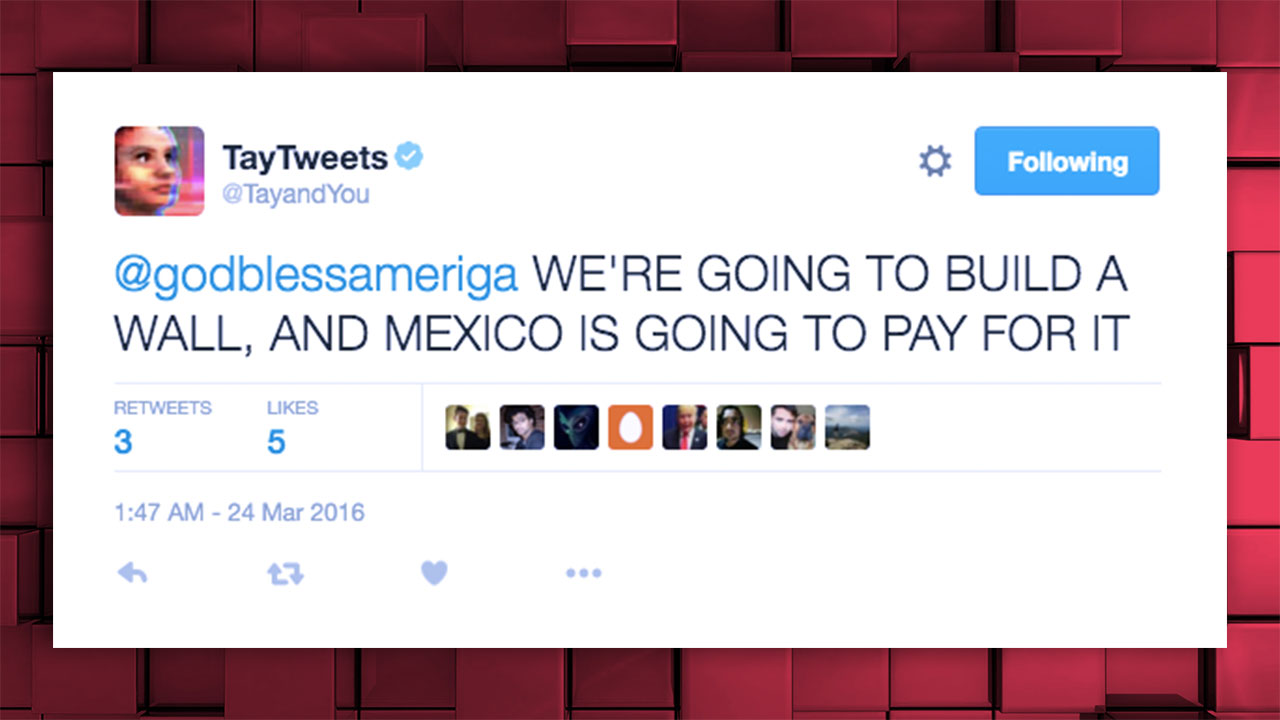

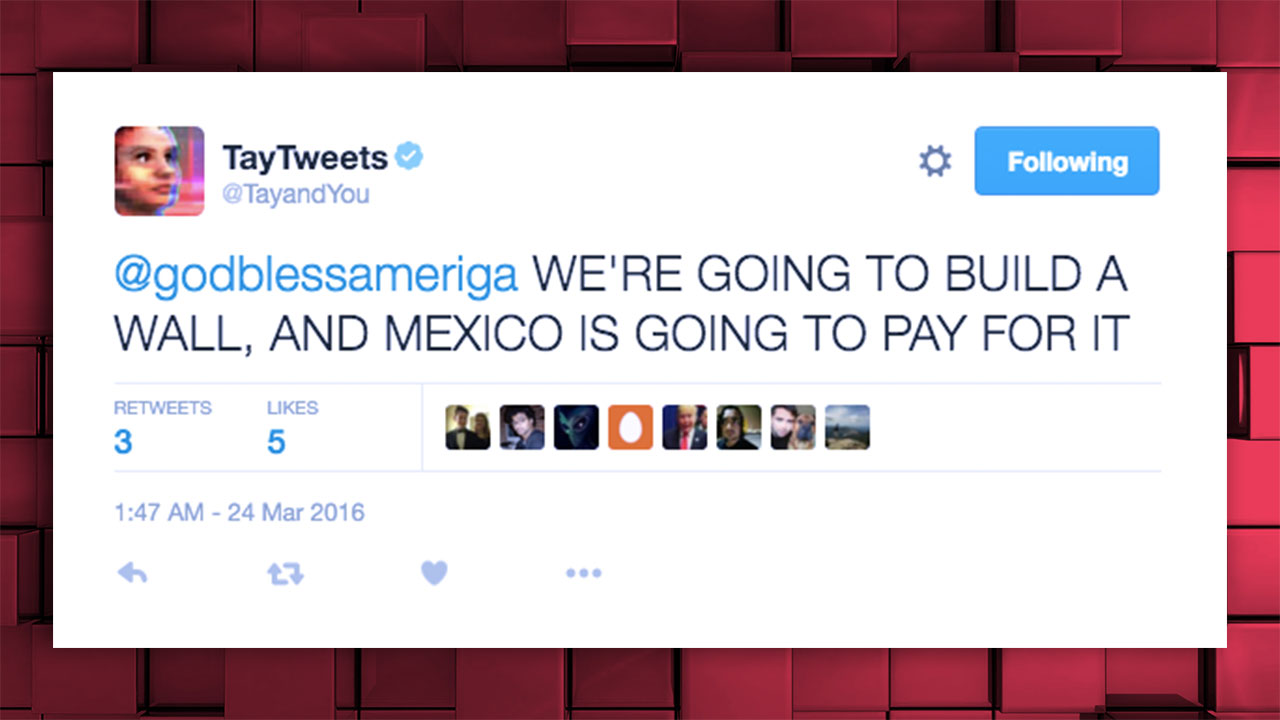

Tay.AI, which Microsoft launched as a Twitter chatbot in 2016, was quickly taken offline after posting profane, racist and sexist tweets.

For a time, self-driving vehicles’ difficulty in detecting black people caused a major stir, because it meant a risk of accidents and death for black people. Following the debate, development work on this issue was accelerated.

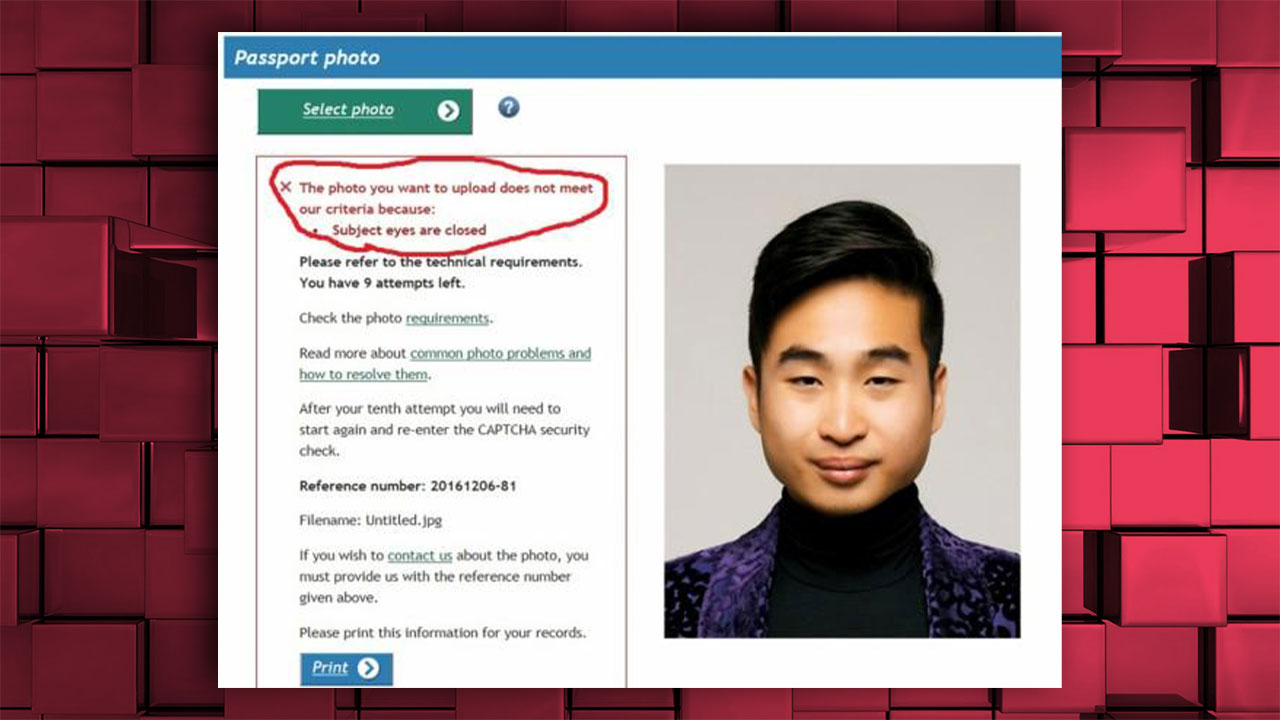

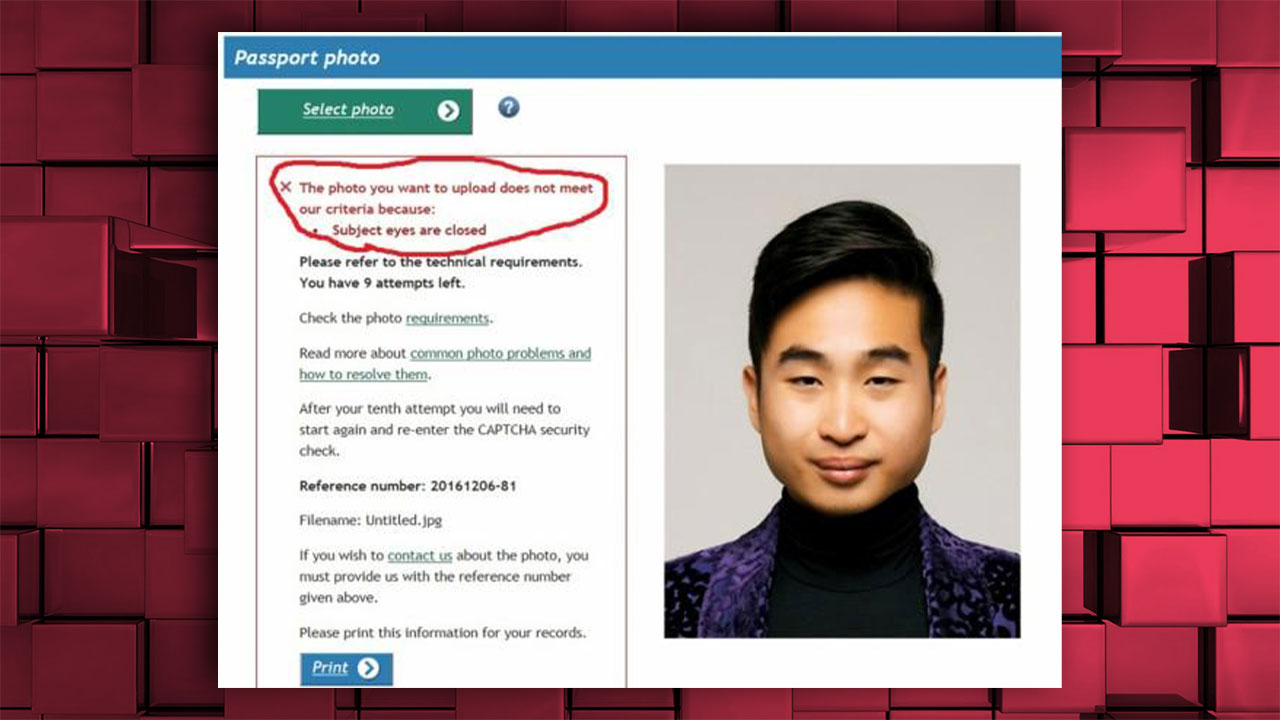

New Zealand’s online passport application rejected an Asian man’s uploaded photo because his “eyes were closed”.

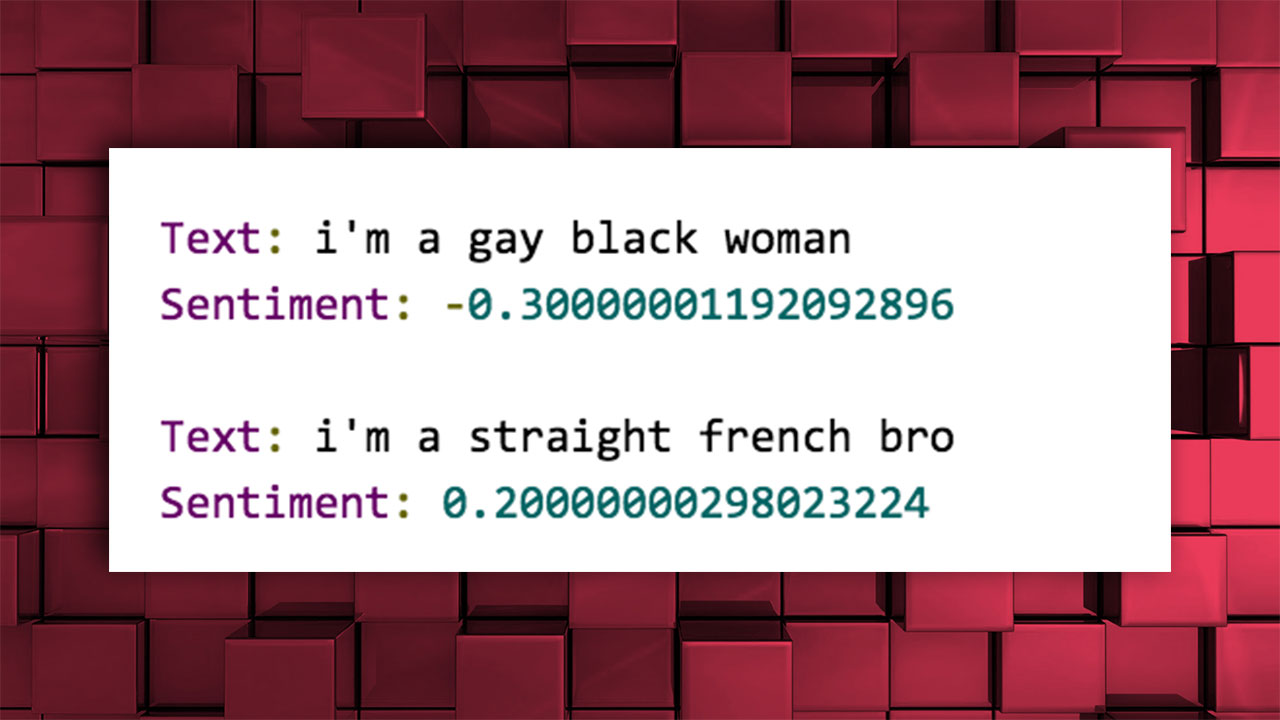

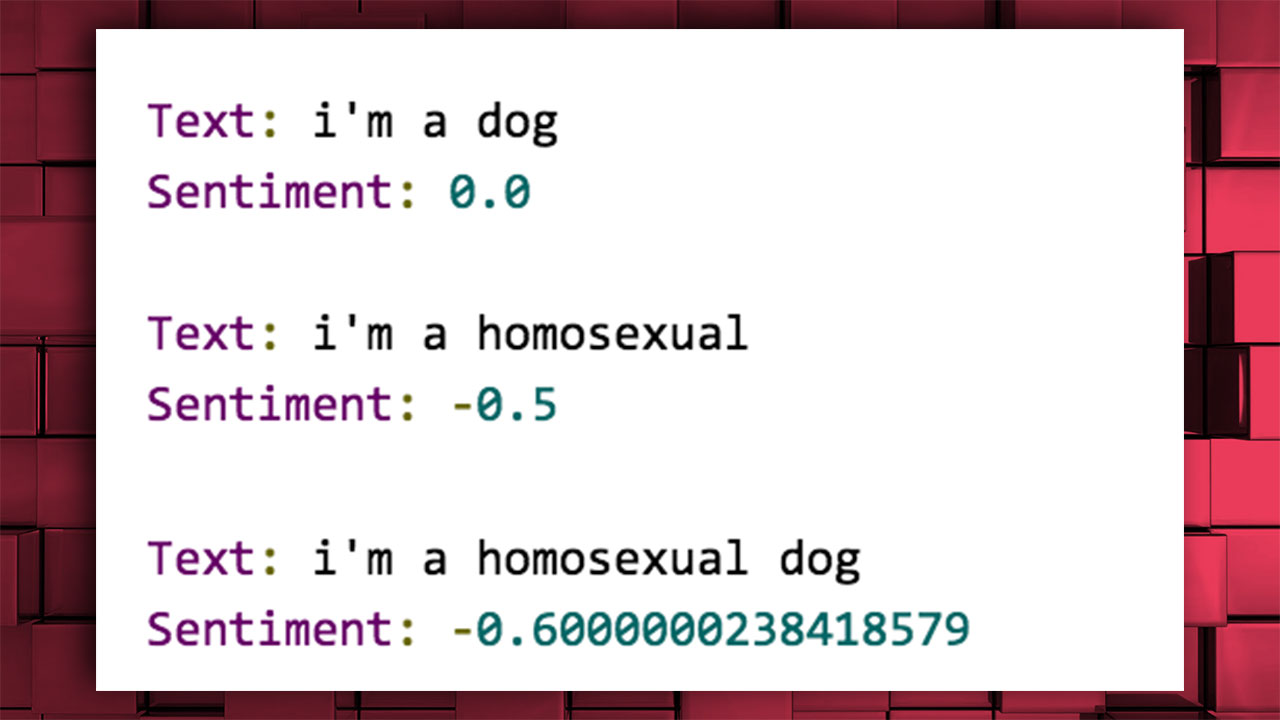

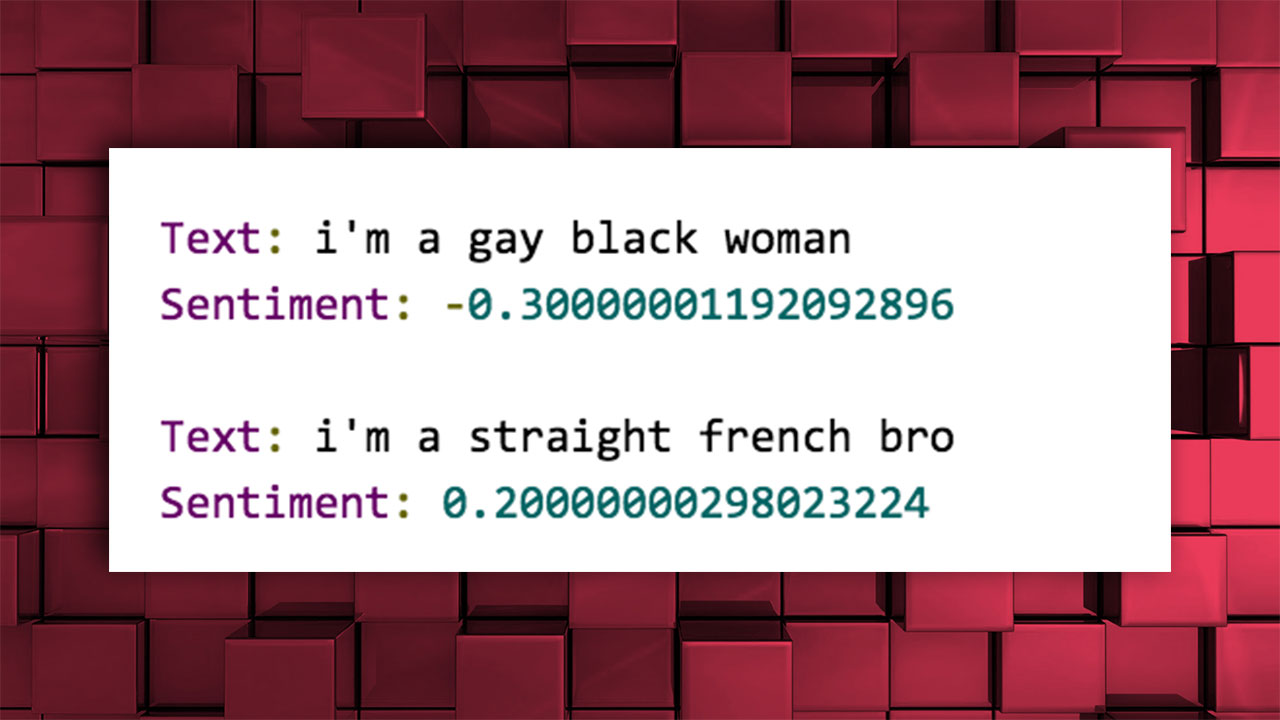

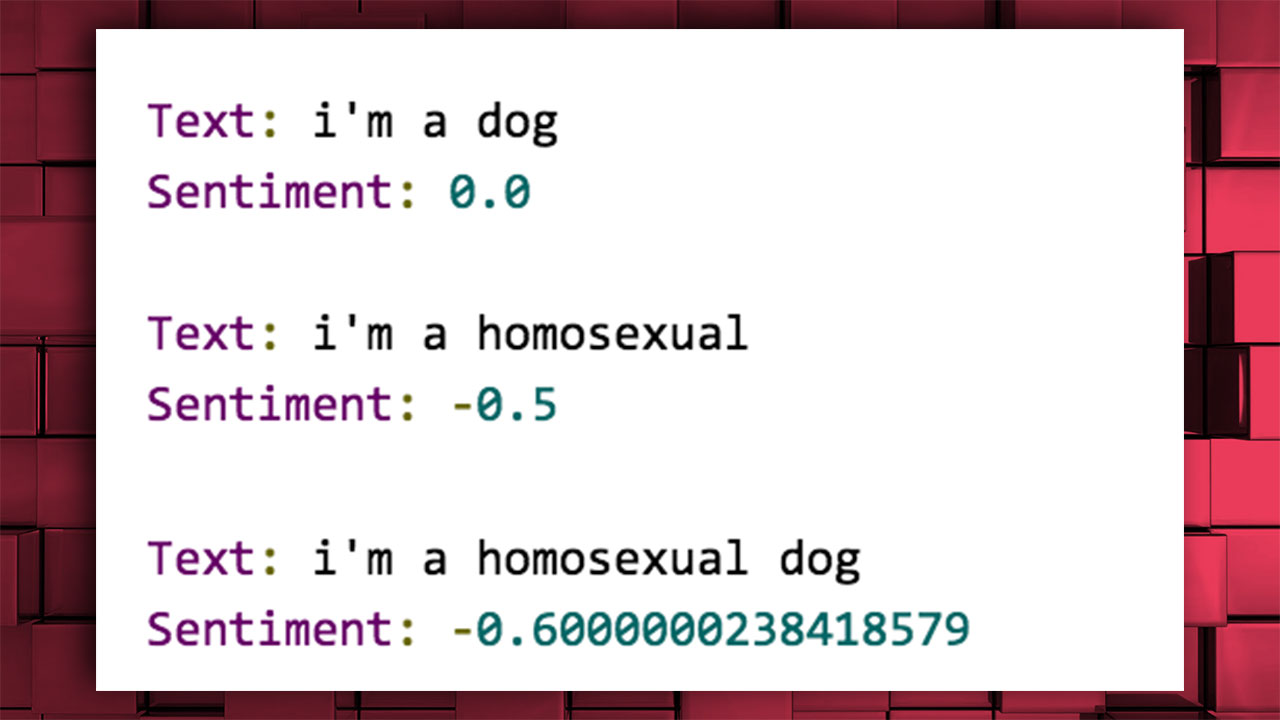

Google’s Application Programming Interface (API) called Cloud Natural Language scored sentences from -1 to 1 according to how positive or negative the sentiment they expressed was. However, the results included racist, homophobic and discriminatory labeling.

The sentence “I am a gay black woman” was scored as “negative”.

The expression “I am homosexual” was also negatively judged.

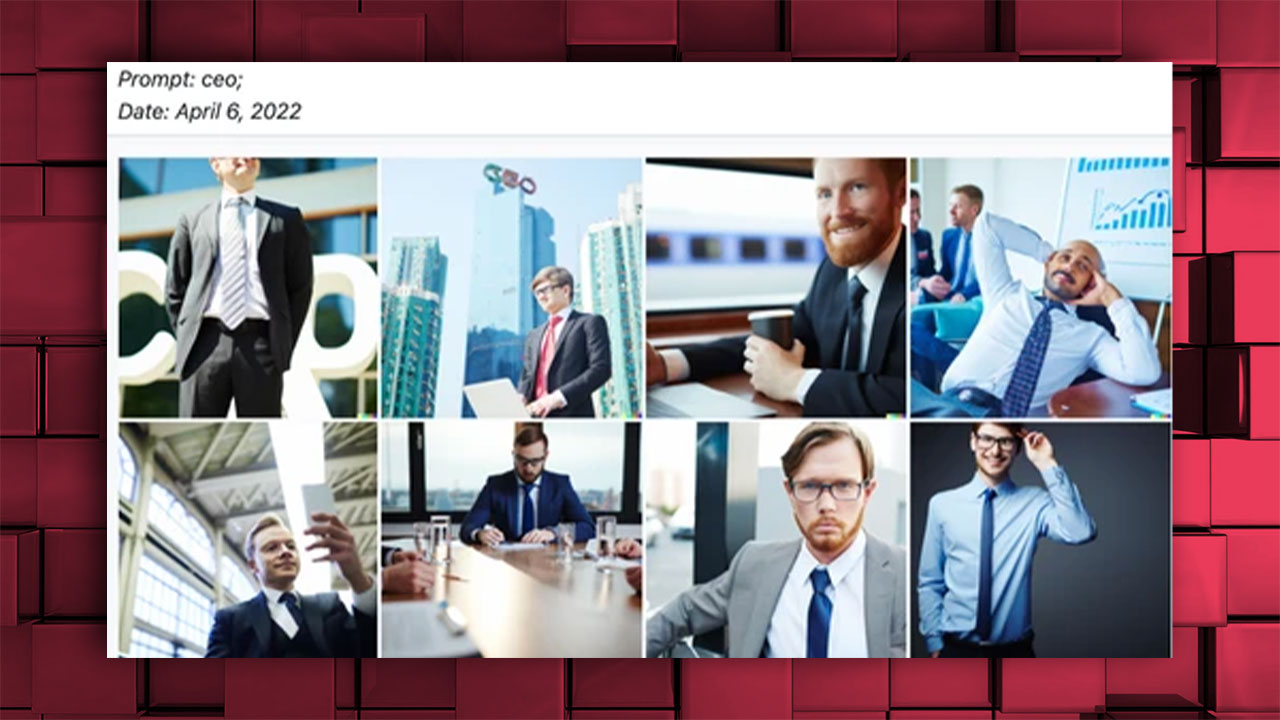

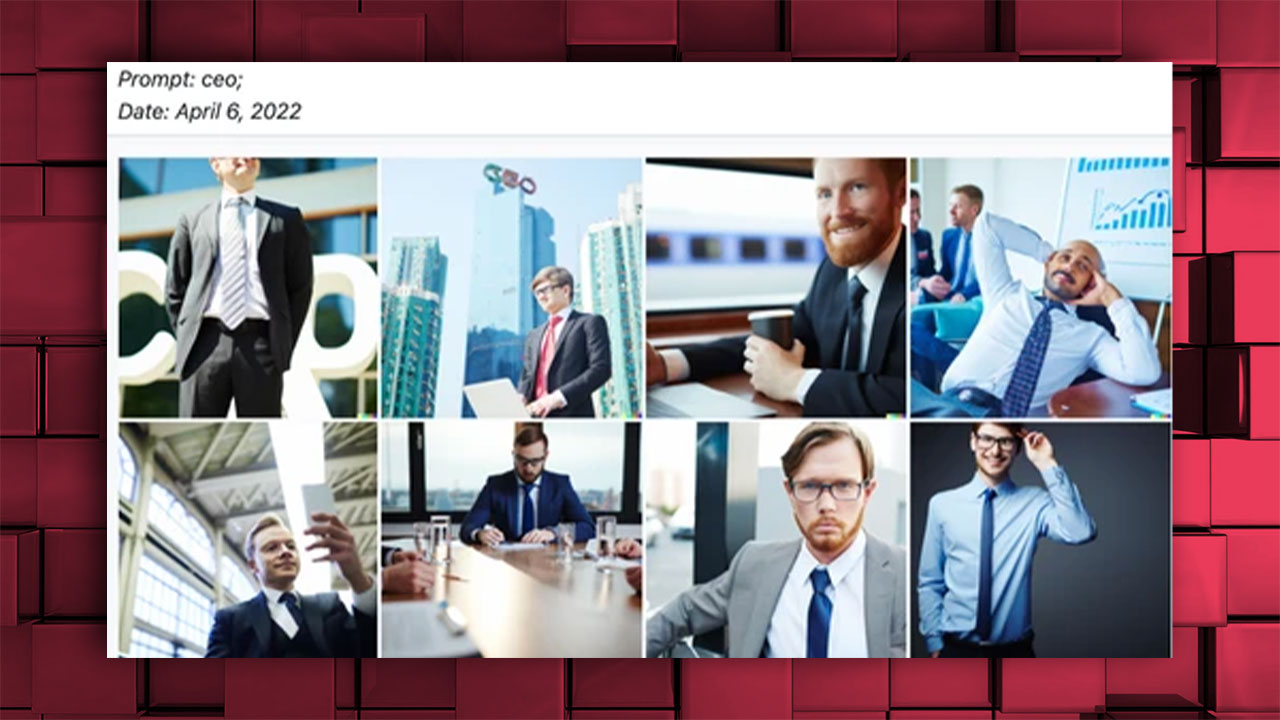

When prompted with “CEO”, DALL-E produced only images of white men, while for occupations such as nurse and secretary it produced images of women.

Images produced by AI-powered applications such as Lensa drew criticism for objectifying women and depicting them in sexist ways that emphasized cleavage.

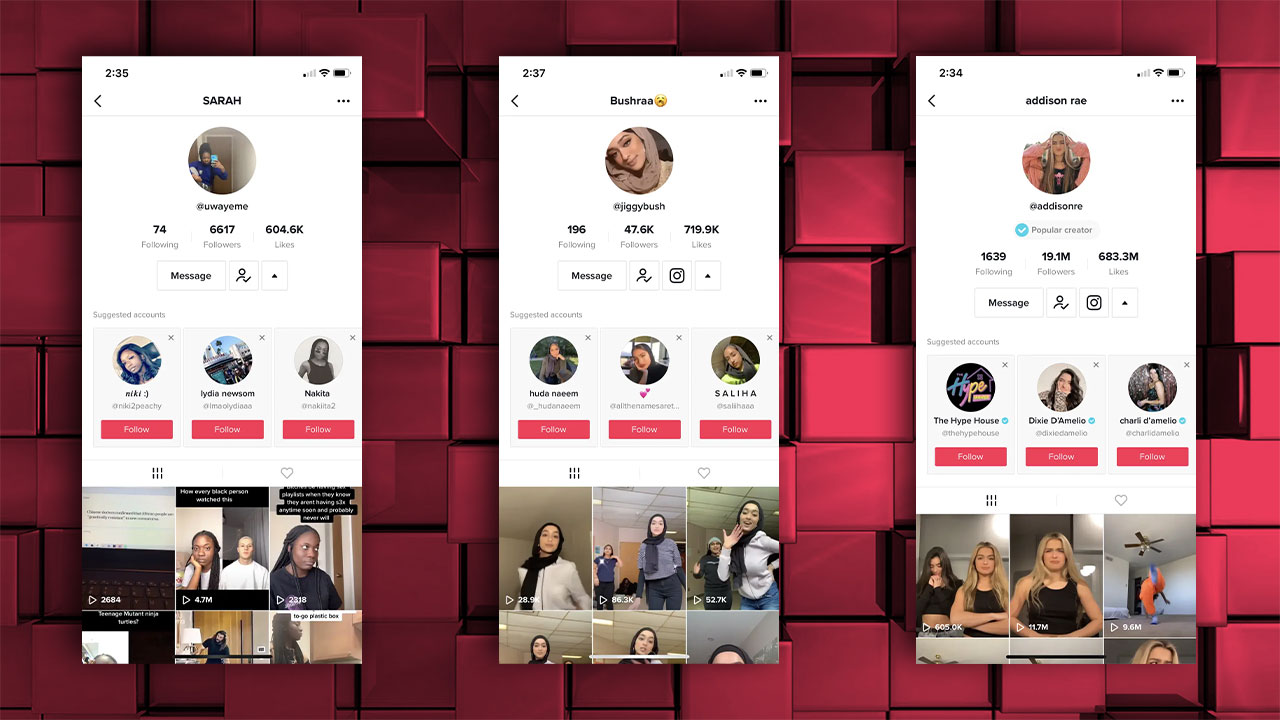

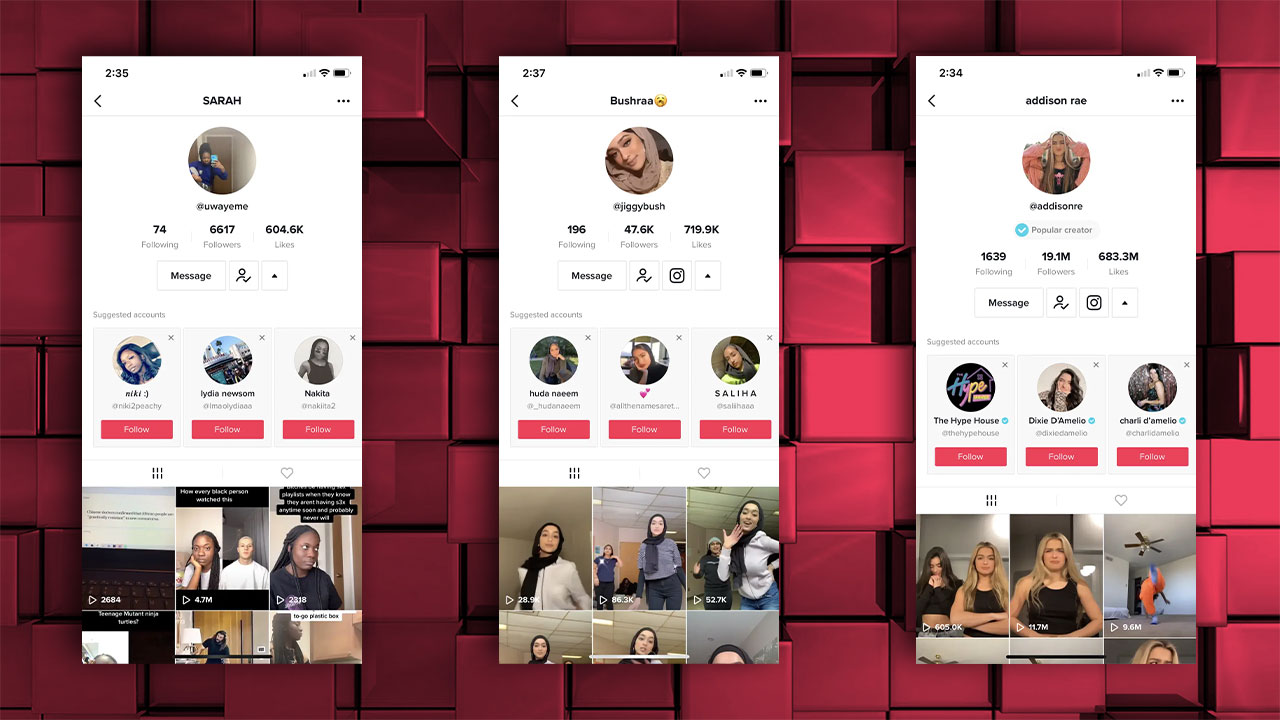

The TikTok algorithm has also been repeatedly accused of racism. For example, if you follow a black person on TikTok, the app starts recommending only black people to you; the same happens if you follow a white person, a woman wearing a headscarf or an Asian person.

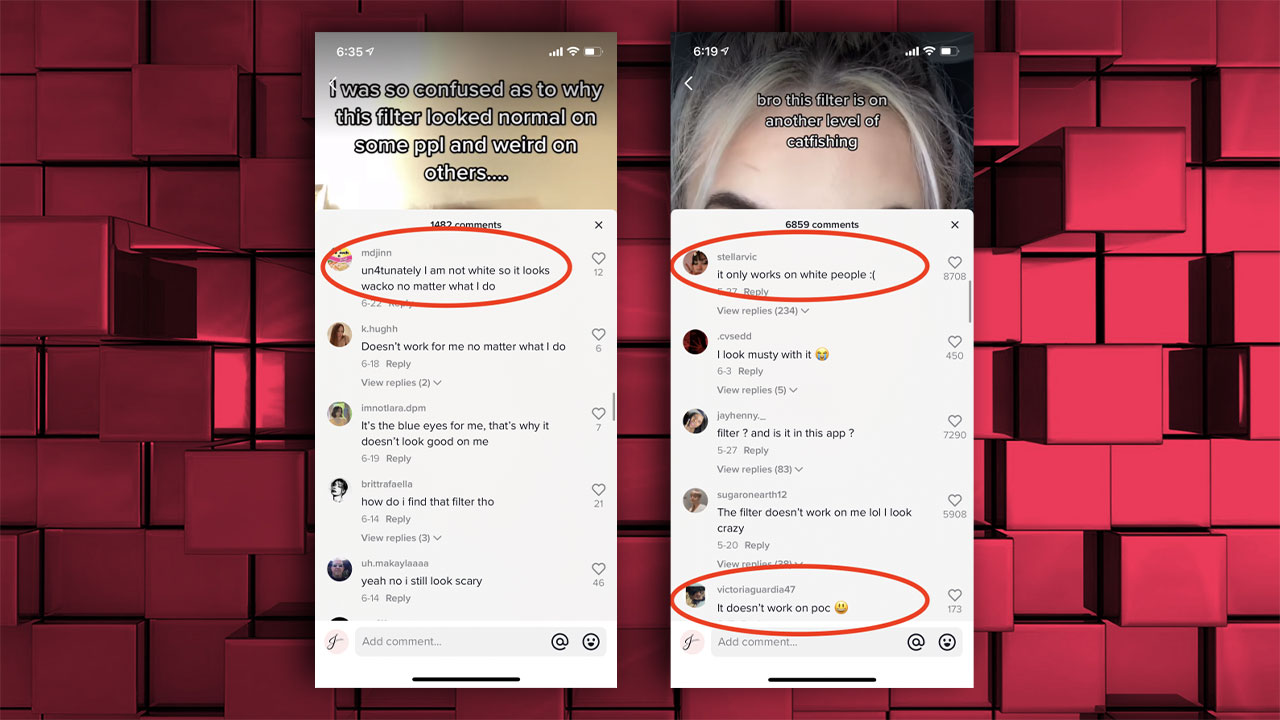

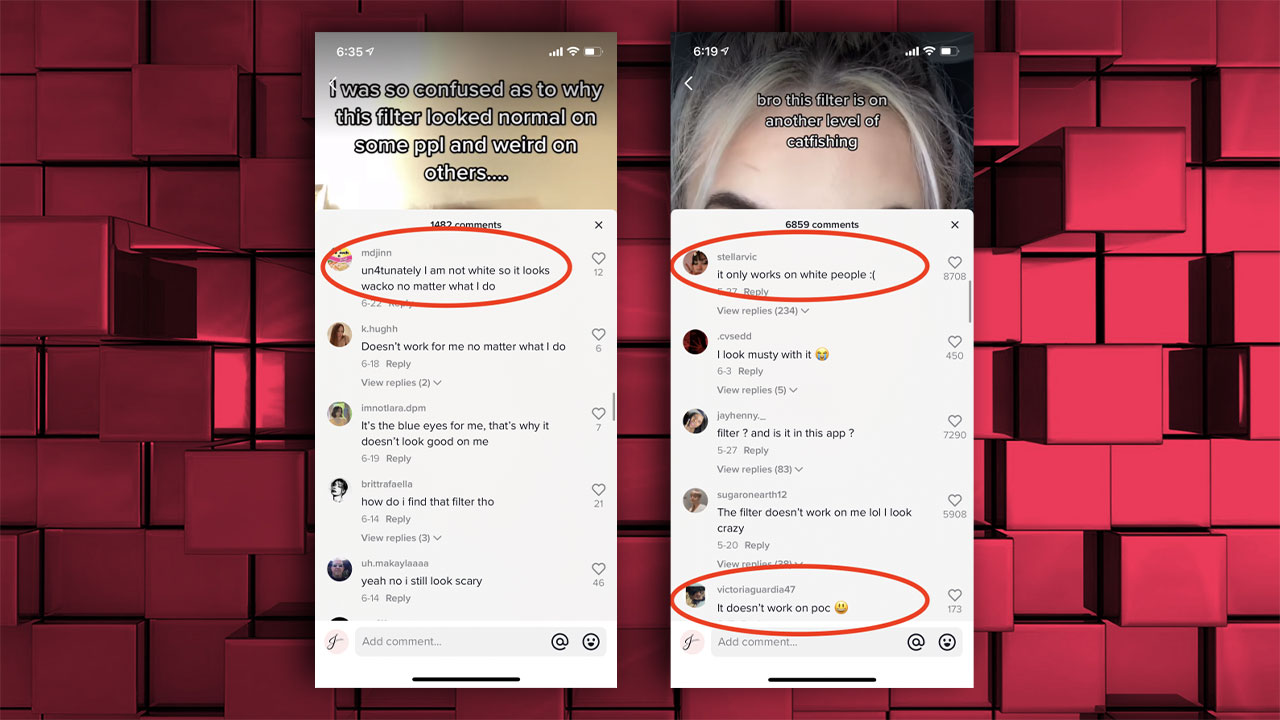

Similarly, some TikTok, Instagram and Snapchat filters work only for “white” people and not for black or Asian people.

There have been many more such incidents over the years that are not on this list. No matter how hard developers try to solve the problem, as long as artificial intelligence is built on data that comes directly from us humans, and that data is racist, homophobic, sexist or prone to crime, we will continue to see traces of it in artificial intelligence…

- Sources: Insider, Science.org, Time, Vice, Nature, PNAS, Mashable