ChatGPT exposed the data of its users

- March 24, 2023

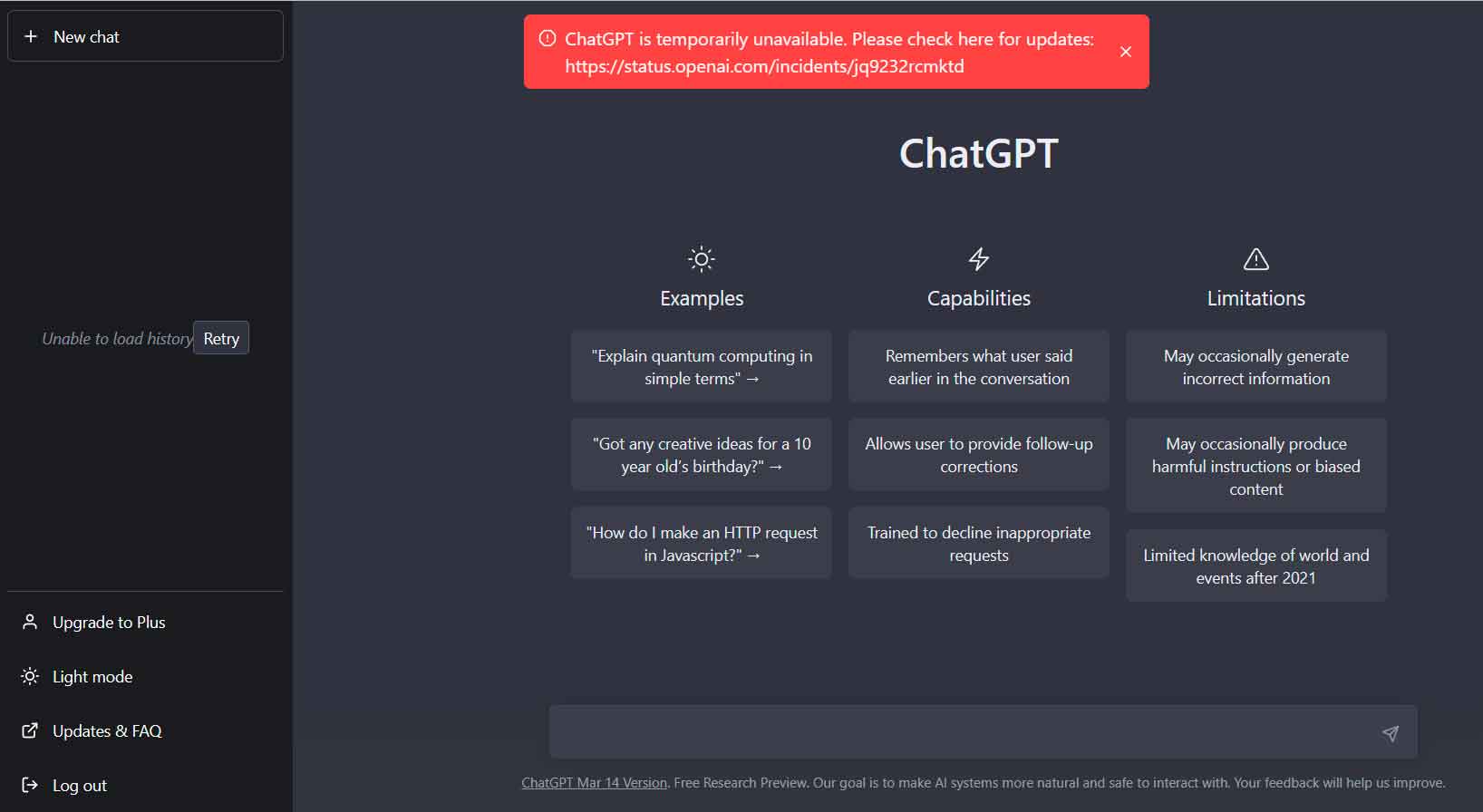

As we reported at the time, ChatGPT experienced a multi-hour outage last Monday. The incident was resolved within the day, although some users did not recover their chat history until several hours after it was considered closed. From the information available on the incident status page, everything pointed to a bug affecting the chatbot’s functionality and nothing more.

The outage got me thinking about what we need to take into account when integrating an AI model into any aspect of our lives: work, society, study, and so on. I’ve lost count of how many times I’ve mentioned how useful these services are and how beneficial they can be in many cases, but I have always kept in mind that we cannot depend on them entirely, just as we cannot depend entirely on a smartphone to open the front door: if we are left without the smartphone, we are left with no way to get into the house.

However, as the days went by, we learned that there was much more to Monday’s events than first met the eye. It wasn’t a crash, but a voluntary shutdown. And this is neither a rumor nor a leak: it comes from the official OpenAI blog, where the company reports what happened in a post that begins as follows:

«Earlier this week we took ChatGPT offline due to a bug in an open source library that allowed some users to see titles from another active user’s chat history. It is also possible that the first message of a newly created conversation was visible in someone else’s chat history if both users were active at the same time.»

This is already a rather worrying privacy issue in itself, but it pales in comparison with what we discover when we read on:

«Upon further investigation, we also found that the same bug may have caused the inadvertent visibility of payment-related information for 1.2% of the ChatGPT Plus subscribers who were active during a certain nine-hour period. In the hours before we took ChatGPT offline on Monday, some users were able to see another active user’s first and last name, email address, billing address, the last four digits (only) of a credit card number, and the credit card expiration date. Full credit card numbers were never exposed.»

From there, OpenAI says that the circumstances required for a user’s information to be exposed were extremely specific, and so plays down the number of users who may have been affected by the problem. It also states that all of them have already been notified, with a message reassuring them that their security will not be compromised by what happened.

The problem is that the mere disclosure of personal data is already a security threat, because if that data spreads and ends up in the wrong hands it can be used in spear-phishing attacks. In practice, these ChatGPT users, and Plus subscribers in particular, will have to treat unexpected communications with special suspicion.

I do have to admit, and this is a point in its favor, that OpenAI disclosed the exact cause of the incident as well as the measures taken to fix it. The root cause was a bug in redis-py, the Python client for Redis, an in-memory database that stores key-value data and is used by the OpenAI chatbot.
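
To give a rough idea of how a shared connection to a key-value store can hand one user’s data to another, here is a toy simulation in plain Python. It does not use redis-py and does not reproduce the actual bug; the `SharedConnection` class, the key names, and the stored values are all hypothetical, chosen only to illustrate the general failure mode of a reply left unread on a reused connection.

```python
from collections import deque


class SharedConnection:
    """Hypothetical stand-in for a pooled connection to a key-value store.

    Requests go in and replies come out strictly in order, so a reply
    that is never read stays at the front of the queue.
    """

    def __init__(self, store):
        self.store = store        # in-memory key-value data
        self.replies = deque()    # replies waiting to be read

    def send(self, key):
        # The toy "server" answers immediately and queues the reply.
        self.replies.append(self.store.get(key))

    def receive(self):
        # The caller reads whichever reply is next in line.
        return self.replies.popleft()


# Made-up data for two users.
store = {
    "user:alice:title": "Alice's chat",
    "user:bob:title": "Bob's chat",
}
conn = SharedConnection(store)

# Alice's request is sent, then abandoned before its reply is read.
conn.send("user:alice:title")

# The same connection is reused for Bob without discarding the stale reply.
conn.send("user:bob:title")
bob_sees = conn.receive()

print(bob_sees)  # Bob receives the reply that was meant for Alice
```

In this sketch the leak disappears if the connection is reset or discarded whenever a request is abandoned, which is the kind of safeguard a real client has to guarantee.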

Source: Muy Computer

Donald Salinas is an experienced automobile journalist and writer for Div Bracket. He brings his readers the latest news and developments from the world of automobiles, offering a unique and knowledgeable perspective on the latest trends and innovations in the automotive industry.