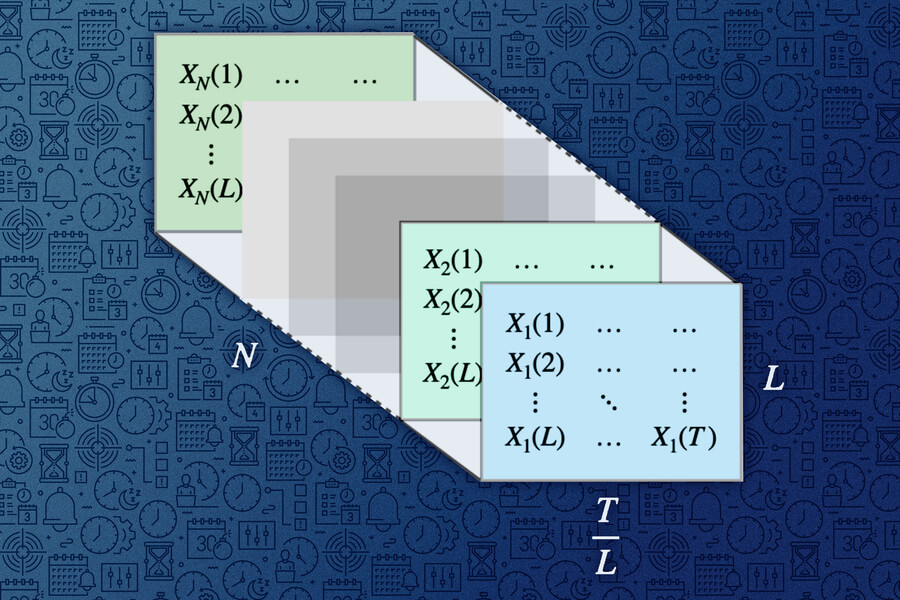

As the researchers explain in a paper currently under review, the AI model, called MinD-Video, was “trained” on publicly available fMRI data (specifically, recordings of people's brain activity made while they watched videos) and combined with an advanced version of the Stable Diffusion AI image generator.

Results

Using this combination, the researchers were able to create “high-quality” reconstructions of the videos previously shown to the experiment participants. To do this, the model drew on the brain activity recorded while the participants were being scanned.

According to the authors, their model recreated these videos with an average accuracy of 85%, as measured by “various semantic and pixel metrics.”

Original and reconstruction / Photo: Chen

“Understanding the information hidden in our complex brain activity is a big mystery in cognitive neuroscience. We show that high-quality videos with arbitrary frame rates can be recreated using MinD-Video,” the paper says.

This work builds on the researchers’ earlier attempts to use artificial intelligence to reproduce images from brain activity alone. The new AI video reconstructions, while not entirely accurate, are impressive overall. At this stage of development, the technology captures the gist of what a person sees but does not convey all the details. Several comparisons of the original and “reconstructed” videos can be found on the researchers’ website. For example:

- The system rendered a video of a jellyfish as a clip of a swimming fish.

- A video featuring a turtle was likewise reconstructed as a fish.

- A video of a crowd walking down a busy street turned into a similarly crowded scene, but with much brighter colors.

While this research is exciting, we’re still a long way from a future where we can put on a helmet and get a perfect AI-generated video stream of everything we see.

Source: 24 Tv

I’m Maurice Knox, a professional news writer with a focus on science. I work for Div Bracket. My articles cover everything from the latest scientific breakthroughs to advances in technology and medicine. I have a passion for understanding the world around us and helping people stay informed about important developments in science and beyond.